Modern corrections agencies sit at a unique intersection of public safety, rehabilitation, and government accountability. At the Virginia Department of Corrections (VADOC), harnessing operational data is not simply a technology initiative — it is foundational to reducing recidivism, improving facility safety, and delivering better outcomes for incarcerated individuals and the communities they return to. Sylvester Hubbard III, Director of Enterprise Application Services, offers a frank, practitioner-level look at what data-driven corrections actually means on the ground.

A Data Landscape Unlike Any Other

VADOC manages an extraordinary volume and variety of operational data. Like any large government agency, it runs HR, payroll, inventory, and asset management systems. But corrections adds layers that few agencies face: security sensor data from 41 facilities across Virginia, feeds from approximately 15,000 cameras statewide, body-worn device logs, Taser deployment records, license plate recognition systems, and a core Offender Management System (OMS) that anchors everything.

Then there is reentry data — records of inmate training programs, educational participation, vocational skill development, and other rehabilitative interventions designed to reduce the likelihood of reoffending.

"We have security data, reentry data, HR data, operations data — and they all live in silos. Our challenge is cross-pollinating that data in a way that lets us make critical, strategic decisions."

This combination of administrative, security, and rehabilitative data creates both enormous potential and significant complexity. The challenge, as Hubbard describes it, is not collecting data — it is connecting it.

The Silo Problem: Data Without Context

Like most large agencies, VADOC operates with entrenched data silos. Security teams protect their operational intelligence. HR guards personnel records. Reentry program staff manage program data. Operations maintains device and asset information. Each unit has legitimate reasons to be careful with its data — and legitimate concerns about how that data might be used.

The real problem, Hubbard explains, is that meaningful insight requires correlation across these domains. Understanding how an in-facility incident relates to an inmate's program participation history, the administrative context of the supervising officer, and broader facility trends — that kind of analysis demands data that no single silo can provide.
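To make the idea of cross-silo correlation concrete, here is a minimal sketch of joining incident records with program participation on a shared inmate identifier. Everything in it is an assumption for illustration: the field names, the sample rows, and the function `join_incidents_with_programs` are hypothetical and do not describe VADOC's actual systems or schemas.

```python
from collections import defaultdict

# Hypothetical extracts from two silos; all field names are illustrative.
incidents = [
    {"incident_id": "I-1", "inmate_id": "A100", "facility": "F1"},
    {"incident_id": "I-2", "inmate_id": "A200", "facility": "F1"},
    {"incident_id": "I-3", "inmate_id": "A100", "facility": "F2"},
]
program_records = [
    {"inmate_id": "A100", "program": "vocational"},
    {"inmate_id": "A100", "program": "education"},
    {"inmate_id": "A300", "program": "it_skills"},
]


def join_incidents_with_programs(incidents, program_records):
    """Attach each inmate's program history to their incident records."""
    programs_by_inmate = defaultdict(list)
    for rec in program_records:
        programs_by_inmate[rec["inmate_id"]].append(rec["program"])
    # Left join: keep every incident, even when no program history exists.
    return [
        {**inc, "programs": list(programs_by_inmate.get(inc["inmate_id"], []))}
        for inc in incidents
    ]


joined = join_incidents_with_programs(incidents, program_records)
```

The join itself is simple once both silos expose a shared key. The harder work sits upstream of the code, in agreeing on that key and on who may see the combined record.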

This is not a VADOC-specific challenge. Any organization managing complex, multi-stakeholder operations faces the same tension: data is most valuable when integrated, but integration requires trust, governance, and significant technical infrastructure.

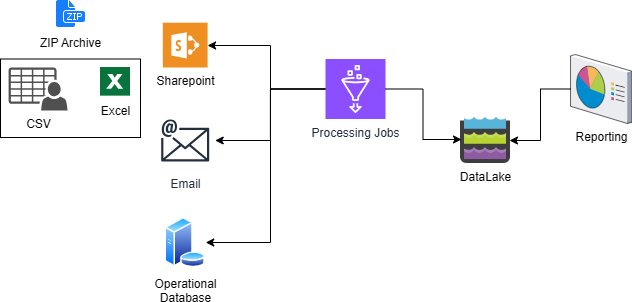

From Data Pond to Data Lake: Building the Foundation

VADOC currently operates what Hubbard candidly calls a "data pond" rather than a true data lake — structured data drawn from OMS, a vendor management system, and a handful of smaller systems. It is a starting point, not a destination.

From that foundation, the team maps, labels, cleanses, and summarizes data into structured datasets that power reporting, Power BI dashboards, data extractions, and early-stage predictive analysis. The agency maintains over 400 custom reports built on OMS alone — reports developed over time in response to operational needs, with approximately 80 new reports added each year.
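The map, label, cleanse, and summarize sequence can be sketched in a few lines. This is only an illustration under stated assumptions: the raw column names (`OFF_ID`, `FAC_CD`, `PRG_NM`), the labeled field names, and the cleansing rules are all hypothetical, not the agency's actual data model.

```python
from collections import Counter

# Hypothetical mapping from raw source columns to labeled data-model fields.
FIELD_MAP = {"OFF_ID": "inmate_id", "FAC_CD": "facility_code", "PRG_NM": "program_name"}


def map_and_label(raw_row):
    """Rename raw columns to labeled names; unmapped columns are dropped."""
    return {FIELD_MAP[k]: v for k, v in raw_row.items() if k in FIELD_MAP}


def cleanse(row):
    """Trim whitespace and lowercase program names; return None if inmate_id is empty."""
    cleaned = {k: (v.strip() if isinstance(v, str) else v) for k, v in row.items()}
    if not cleaned.get("inmate_id"):
        return None
    if cleaned.get("program_name"):
        cleaned["program_name"] = cleaned["program_name"].lower()
    return cleaned


def build_dataset(raw_rows):
    """Run mapping and cleansing, keeping only rows that pass cleansing."""
    labeled = (map_and_label(r) for r in raw_rows)
    return [c for c in (cleanse(r) for r in labeled) if c is not None]


def summarize(rows):
    """Count enrollments per program, the kind of aggregate a dashboard might show."""
    return Counter(r["program_name"] for r in rows if r.get("program_name"))


raw_rows = [
    {"OFF_ID": " A100 ", "FAC_CD": "F1", "PRG_NM": "Welding ", "LEGACY_FLAG": "x"},
    {"OFF_ID": "", "FAC_CD": "F1", "PRG_NM": "Welding"},
    {"OFF_ID": "A200", "FAC_CD": "F2", "PRG_NM": "welding"},
    {"OFF_ID": "A300", "FAC_CD": "F2", "PRG_NM": "IT Skills"},
]
dataset = build_dataset(raw_rows)
summary = summarize(dataset)
```

Keeping each stage as its own small function mirrors the incremental approach described here: a new source system mostly means a new entry in the field map rather than a new pipeline.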

"We can't eat the elephant all at once. The approach is to start with your data model, label it, map it — and then build from there."

The path forward involves expanding the data lake incrementally, beginning with structured data and progressively incorporating more complex unstructured and real-time data streams — the high-volume, high-velocity security data that arrives continuously from sensors, cameras, and field devices.

Real-Time Doesn't Always Mean Instantaneous

In discussions of data modernization, "real-time" can mean different things. For VADOC, Hubbard reframes the concept around accessibility rather than latency. Real-time reporting is reporting that is available, accessible, and mobile — meaning staff can retrieve the information they need from a smartphone with the same ease as from a desktop workstation.

This mobility-first ambition is driving a broader IT strategy: mobilizing applications wherever technically feasible, so that officers and managers in the field have the same data access as colleagues at headquarters.

True transactional real-time reporting exists — incident logs update as events occur. But the broader goal is ensuring that decision-relevant information is never locked behind a desk-only interface.

The Recidivism Challenge: Measuring What Matters

Virginia currently holds one of the lowest recidivism rates in the nation at approximately 19%, second only to South Carolina. That figure reflects years of investment in rehabilitation programming, and it is the kind of outcome that data-driven corrections is ultimately meant to support.

But measuring the drivers of that success is notoriously difficult. VADOC runs a wide range of programs: vocational training, IT skills courses, educational programs, and more. Attributing changes in recidivism to any specific intervention is, as Hubbard puts it, like being a geologist — you drill for oil, but the well doesn't come in for years.

"We're like Dr. Who — there's a wall of knobs, and we're twisting them without knowing which twist actually moved the needle on recidivism."

The gap between program completion and outcome measurement is a structural challenge. An inmate who completes a five-week IT training program may be released, be supervised by probation and parole, and then transition into employment or return to the justice system. Tracking that arc requires sustained inter-agency data sharing and longitudinal follow-up that current systems do not yet fully support.
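As a rough illustration of what longitudinal follow-up involves, the sketch below computes, per program, the share of released individuals who returned to custody within a follow-up window. The record layout, the function `return_rate_by_program`, and the 1,095-day (roughly three-year) window are assumptions made for the example, not VADOC's definitions. The result is a descriptive rate only and does not by itself show that a program caused the outcome.

```python
from collections import defaultdict
from datetime import date, timedelta


def return_rate_by_program(releases, first_return, as_of, follow_up_days=1095):
    """Share of each program's releasees who returned to custody within the window.

    Only people whose full follow-up window has elapsed by `as_of` are counted,
    so recent releases do not make a program look better than it is.
    """
    window = timedelta(days=follow_up_days)
    eligible = defaultdict(int)
    returned = defaultdict(int)
    for rel in releases:
        window_end = rel["release_date"] + window
        if window_end > as_of:
            continue  # follow-up window not yet complete
        eligible[rel["program"]] += 1
        back = first_return.get(rel["inmate_id"])
        if back is not None and rel["release_date"] <= back <= window_end:
            returned[rel["program"]] += 1
    return {program: returned[program] / n for program, n in eligible.items()}


# Hypothetical sample data.
releases = [
    {"inmate_id": "A1", "program": "it_skills", "release_date": date(2019, 1, 1)},
    {"inmate_id": "A2", "program": "it_skills", "release_date": date(2019, 6, 1)},
    {"inmate_id": "A3", "program": "vocational", "release_date": date(2019, 3, 1)},
    {"inmate_id": "A4", "program": "vocational", "release_date": date(2023, 1, 1)},
]
first_return = {"A2": date(2020, 2, 1), "A3": date(2023, 1, 1)}
rates = return_rate_by_program(releases, first_return, as_of=date(2024, 1, 1))
```

Note that the `first_return` lookup is exactly the piece that depends on inter-agency sharing: the return event may be recorded by a different agency than the one that ran the program.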

Hubbard identifies three pillars for addressing recidivism: teamwork (coordinated effort across departments and agencies), technology (the right tools applied with strategic intent), and metrics (the means to connect interventions to outcomes). None of the three works without the others.

AI as an Accelerant, Not a Replacement

Hubbard is direct about AI's role at VADOC: it belongs wherever it can be usefully applied. That is not a blanket endorsement of automation — it is a recognition that AI-augmented tools are already embedded in the systems the agency uses, and the question is how to deploy them thoughtfully.

In security operations, AI capabilities are already visible: camera systems that flag groups loitering longer than a set threshold, automated object detection for specific items or behaviors, and multi-camera tracking that follows an individual across a facility without manual operator input.
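The loitering rule is, at its simplest, a dwell-time threshold. The sketch below assumes an upstream vision system has already assigned a `group_id` and a `zone` to each sighting; those names, the `Sighting` type, and the 300-second threshold are hypothetical. It deliberately simplifies by measuring the span between the first and last sighting in a zone, without checking whether the group left and came back.

```python
from dataclasses import dataclass


@dataclass
class Sighting:
    group_id: str     # hypothetical ID assigned by an upstream tracker
    zone: str         # named area covered by one or more cameras
    timestamp: float  # seconds since an arbitrary reference point


def flag_loitering(sightings, threshold_seconds):
    """Return sorted (group_id, zone) pairs whose first-to-last sighting span exceeds the threshold."""
    first_seen = {}
    last_seen = {}
    for s in sorted(sightings, key=lambda s: s.timestamp):
        key = (s.group_id, s.zone)
        first_seen.setdefault(key, s.timestamp)
        last_seen[key] = s.timestamp
    return sorted(
        key for key in first_seen
        if last_seen[key] - first_seen[key] > threshold_seconds
    )


sightings = [
    Sighting("g1", "yard", 0),
    Sighting("g2", "yard", 100),
    Sighting("g2", "yard", 150),
    Sighting("g1", "yard", 200),
    Sighting("g1", "yard", 400),
    Sighting("g1", "gate", 500),
]
flagged = flag_loitering(sightings, threshold_seconds=300)
```

A flag of this kind is a prompt for an operator to look, not a conclusion about what the group is doing.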

In administrative operations, the opportunity Hubbard highlights is incident reporting. Today, multiple officers responding to the same event may each file separate reports that a supervisor reviews in isolation, without visibility into the others. An AI layer over incident documentation — something closer to a structured, voice-enabled input tool that captures observations in the moment — could improve report completeness, flag discrepancies, and consolidate supervisor review.
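The consolidate-and-flag step can be illustrated without any AI at all. The sketch below groups structured reports by incident and lists the fields on which the officers' accounts differ. The field names (`location`, `time_of_event`, `persons_involved`) and the function `consolidate_reports` are hypothetical, and this rule-based comparison is a stand-in for whatever tooling an agency might actually adopt.

```python
from collections import defaultdict


def consolidate_reports(reports, fields=("location", "time_of_event", "persons_involved")):
    """Group reports by incident and list fields where the officers' accounts differ."""
    by_incident = defaultdict(list)
    for report in reports:
        by_incident[report["incident_id"]].append(report)

    consolidated = {}
    for incident_id, group in by_incident.items():
        discrepancies = {}
        for field in fields:
            values = {r["officer"]: r.get(field) for r in group}
            # More than one distinct value means the accounts disagree on this field.
            if len(set(values.values())) > 1:
                discrepancies[field] = values
        consolidated[incident_id] = {
            "officers": [r["officer"] for r in group],
            "discrepancies": discrepancies,
        }
    return consolidated


# Hypothetical sample reports.
reports = [
    {"incident_id": "I-7", "officer": "Officer A", "location": "Dining hall",
     "time_of_event": "14:05", "persons_involved": 3},
    {"incident_id": "I-7", "officer": "Officer B", "location": "Dining hall",
     "time_of_event": "14:10", "persons_involved": 3},
    {"incident_id": "I-8", "officer": "Officer C", "location": "Yard",
     "time_of_event": "09:00", "persons_involved": 2},
]
result = consolidate_reports(reports)
```

With a view like this, a supervisor sees all accounts of one incident together, along with the specific points of disagreement, rather than reviewing each report in isolation.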

The principle Hubbard applies is one that holds across public sector contexts: technology augments human judgment; it does not replace it. The goal is not to automate decisions but to make the people making those decisions better informed.

Innovation as Incremental Progress

One of the most useful reframes Hubbard offers is his definition of innovation. Drawing on a principle from graduate school, he describes innovation as anything that makes something better — not a single transformative breakthrough, but a continuous accumulation of improvements. The comparison to Steph Curry's three-point shooting is apt: the visible result reflects thousands of unseen repetitions.

Applied to government data strategy, this means resisting the pressure to deliver a fully realized data lake before extracting any value. It means mobilizing one application at a time, adding one data source at a time, retiring one obsolete report at a time.

It also means being honest about limitations. VADOC is not yet where it wants to be. The data pond needs to become a lake. The 400-plus reports need to be audited and rationalized. Cross-agency data sharing needs governance frameworks that respect both utility and privacy. None of that happens overnight — but all of it happens through deliberate, sustained effort.

The Human Constant

VADOC operates under a set of organizational values its director describes as five pillars: humility, passion, unity, servanthood, and thankfulness. Hubbard closes his remarks by returning to these — not as a rhetorical gesture, but as a substantive point about the limits and purpose of technology.

Corrections is, at its core, a human enterprise. The data, the dashboards, the AI-assisted cameras, and the predictive models exist to support people — officers, case managers, program instructors, and the incarcerated individuals working toward reentry. Technology that forgets that purpose risks optimizing for the wrong outcomes.

"All of this technology, all the AI and all those other things, cannot replace you. The only thing they can do is help you do your job better."

That framing should resonate beyond corrections. Across government, the agencies making the most effective use of data are those that treat it as a resource for their people, not a substitute for them.

Key Takeaways for Government Data Leaders

- Start with a data model, not a data lake. Build your foundation on structured, well-mapped data, then expand incrementally.

- Cross-pollinating siloed data is the central challenge — and the central opportunity — in complex government agencies.

- Define real-time by accessibility, not just latency. Mobile-first data access can deliver significant operational value before full real-time infrastructure is in place.

- Recidivism and other long-cycle outcomes require longitudinal, cross-agency data strategies that most agencies have not yet built.

- AI is most effective as an augment to human decision-making, not a replacement for it — especially in high-stakes public safety contexts.

- Innovation is incremental. Accumulating small improvements consistently outperforms waiting for transformative overhaul.

- Report debt is real. A catalog of 400+ reports that grows by roughly 80 per year without regular rationalization creates technical and operational drag.
