Singapore has not “achieved Gov 4.0.” But it is doing something arguably more important: putting the principles, platforms, and guardrails in place that make connected, agentic government possible.

Gov 4.0 is not a badge you earn by launching a chatbot or adopting a new model. It is an operating model shift, where government can coordinate across silos, respond in real time, and safely delegate bounded tasks to AI-enabled systems, while maintaining public trust and human accountability.

Singapore’s recent work across national AI strategy, public-sector AI adoption, and agentic AI governance signals a clear direction of travel: connected government, with increasing “agency” in digital services, under strong controls.

From digital government to Gov 4.0: what “agent-ready” really means

In a Gov 4.0 world, citizens should not have to understand which agency owns a service, which form to use, or which policies apply. The system should be able to:

- interpret intent,

- draw on the right information securely,

- coordinate across agencies,

- and execute clearly bounded actions with oversight.
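The four capabilities above can be sketched as a small pipeline. This is a minimal, hypothetical sketch, not a description of any Singapore system. Keyword matching stands in for an intent model, and the intents, agency names, consent set, and `execute` sign-off rule are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical registry mapping a citizen intent to the agencies involved.
# Intent and agency names are illustrative, not real government services.
SERVICE_REGISTRY = {
    "change_address": ["housing_agency", "tax_agency", "transport_agency"],
    "register_birth": ["registry_agency", "health_agency"],
}

# Keyword matching stands in for an intent model (e.g. an LLM) in this sketch.
INTENT_KEYWORDS = {
    "change_address": ("moved", "new address", "change address"),
    "register_birth": ("birth", "newborn", "baby"),
}


@dataclass
class Plan:
    intent: str
    agencies: List[str]
    steps: List[str] = field(default_factory=list)


def interpret_intent(message: str) -> Optional[str]:
    """Step 1: map free-text input to a known intent, or None if unsure."""
    text = message.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(k in text for k in keywords):
            return intent
    return None


def plan_request(message: str, consented: Set[str]) -> Optional[Plan]:
    """Steps 2-3: pick the agencies to coordinate, limited to data-sharing consent."""
    intent = interpret_intent(message)
    if intent is None:
        return None  # unrecognised request: route to a human officer instead
    agencies = [a for a in SERVICE_REGISTRY[intent] if a in consented]
    return Plan(intent, agencies, [f"notify:{a}" for a in agencies])


def execute(plan: Plan, approved_by: Optional[str]) -> List[str]:
    """Step 4: run the bounded plan only after a named person signs off."""
    if not approved_by:
        raise PermissionError("plan requires human sign-off before execution")
    return [f"{step} (approved by {approved_by})" for step in plan.steps]
```

For example, `plan_request("I just moved to a new address", {"housing_agency", "tax_agency"})` produces a plan that notifies only those two agencies; the transport agency is skipped because the citizen has not consented to share data with it, and nothing runs until `execute` receives an approver.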

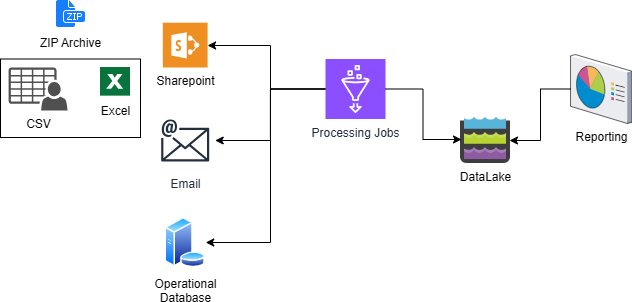

This is where the idea of agentic AI becomes relevant. AI agents can plan across multiple steps and take actions on behalf of users. That capability is powerful, but it also raises the stakes: agents may touch sensitive data, trigger real-world changes, or unintentionally take incorrect actions if not properly governed.[2]

Singapore’s approach suggests an emerging principle for Gov 4.0:

Capability must rise with accountability.

A practical stepping stone: moving government chat experiences toward LLM engines

One visible marker of the shift is the plan to transition existing government chat experiences to large language model (LLM) engines by end-2023. The potential upside is significant: faster and more natural service interactions, more personalised responses, and reduced friction in government–citizen communication.

But through a Gov 4.0 lens, this should be framed carefully.

LLM-powered chat is best understood as a front-end accelerator. It can dramatically improve the interface. It can help people get answers faster. It can guide citizens through processes more intuitively.

What it does not automatically deliver is the deeper Gov 4.0 outcome: connected execution across government. That requires the harder work behind the interface: interoperable services, trustworthy data-sharing pathways, strong identity and access controls, operational resilience, and governance that can keep pace with increasing autonomy.
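One way to see the split between interface and execution: the LLM front-end can only *request* an operation, while the back-end decides whether the authenticated user may perform it. The sketch below is a hypothetical illustration under stated assumptions; the operation names, scope strings, and `Session` type are invented for this example and do not correspond to any real government API.

```python
from dataclasses import dataclass
from typing import Any, Dict, FrozenSet

# Hypothetical back-end operations the chat front-end may request, each
# mapped to the access scope it requires. Names are illustrative only.
BACKEND_OPERATIONS = {
    "get_application_status": "applications:read",
    "update_contact_details": "profile:write",
}


@dataclass(frozen=True)
class Session:
    user_id: str
    scopes: FrozenSet[str]  # permissions granted when the user authenticated


def call_backend(session: Session, operation: str, **params: Any) -> Dict[str, Any]:
    """Enforce access control server-side, whatever the LLM asked for."""
    required = BACKEND_OPERATIONS.get(operation)
    if required is None:
        raise ValueError(f"unknown operation: {operation}")
    if required not in session.scopes:
        raise PermissionError(f"{operation} requires scope {required}")
    # A real system would call the owning agency's API here; this returns a stub.
    return {"operation": operation, "user": session.user_id, "params": params}
```

The design choice is that the check lives in the back-end, not in the prompt: even if the model generates a request for an operation the user is not entitled to, the call fails.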

NAIS 2.0: shifting from AI “projects” to AI “systems”

Singapore’s National AI Strategy 2.0 (NAIS 2.0) explicitly signals a shift from “Projects to Systems” so that AI impact is not limited to isolated pilots. It lays out a national approach to scaling AI through three “Systems,” supported by enablers.

For Gov 4.0 readiness, three ideas stand out:

- Whole-of-government uplift, not just agency-by-agency tools, including functional domains like service delivery and productivity.

- People and capability building, so AI is used confidently and safely across the public service, not only by specialist teams.

- A trusted environment, where safety, reliability, and security are treated as foundations for adoption, not afterthoughts.

This is how governments become agent-ready: not by buying a single solution, but by building national capacity that can support new service models at scale.

IMDA’s Agentic AI Governance Framework: designing guardrails before scaling autonomy

If Gov 4.0 is the destination, agentic AI is one possible vehicle. IMDA’s Model AI Governance Framework for Agentic AI (2026) reads like a blueprint for how to move responsibly toward that future.

It emphasises that humans remain ultimately accountable, and it highlights the distinct risks of agentic AI, including unauthorised or erroneous actions and automation bias.

The framework’s recommendations can be cleanly translated into Gov 4.0 principles, such as:

- Bounded autonomy: limit what systems can do, where they can act, and what they can access.

- Meaningful human checkpoints: define where human approval is required before high-impact actions.

- Lifecycle assurance: baseline testing, controlled access to tools, and ongoing governance throughout deployment.

- End-user responsibility: transparency, education, and training so users understand system limits.
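Bounded autonomy and meaningful human checkpoints can be made concrete as a policy gate that sits between an agent and the tools it calls, with an audit log supporting ongoing governance. This is a minimal sketch under assumptions, not IMDA's prescribed mechanism; the tool names, impact tiers, and refund ceiling are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Tuple


class Impact(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class ToolPolicy:
    impact: Impact
    max_amount: float = 0.0  # per-call ceiling; 0.0 means no monetary actions


# Bounded autonomy: the agent may only use tools on this (hypothetical) allowlist.
POLICIES = {
    "lookup_faq": ToolPolicy(Impact.LOW),
    "book_appointment": ToolPolicy(Impact.LOW),
    "issue_refund": ToolPolicy(Impact.HIGH, max_amount=500.0),
}


@dataclass
class Guardrail:
    audit_log: List[Tuple[str, float, Optional[str], str]] = field(default_factory=list)

    def authorise(self, tool: str, amount: float = 0.0,
                  approver: Optional[str] = None) -> str:
        policy = POLICIES.get(tool)
        if policy is None:
            decision = "denied: tool not allowlisted"
        elif amount > policy.max_amount:
            decision = "denied: exceeds limit"
        elif policy.impact is Impact.HIGH and approver is None:
            # Human checkpoint: high-impact actions wait for a named approver.
            decision = "pending: human approval required"
        else:
            decision = "allowed"
        # Every request is recorded, including denials, for later review.
        self.audit_log.append((tool, amount, approver, decision))
        return decision
```

In this sketch the hard ceiling applies even when a human approves, so a checkpoint cannot widen the agent's bounds; it can only release actions already inside them.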

This is not “innovation theatre.” It is practical scaffolding for scaling AI in ways that remain safe and trusted.

Why this matters: AI for public good depends on trust, not just capability

The key point is visible in GovTech’s AI and data science initiatives, which show how data can improve public services and support societal wellbeing.

Through this lens, Singapore’s path to Gov 4.0 is best framed as a deliberate effort to make sure AI delivers public value without eroding trust. The ambition is not only smarter government services, but:

- services that are easier to access and navigate,

- decisions that remain accountable,

- systems that are resilient under attack,

- and AI that is deployed in ways that protect people, not merely processes.

In other words, Singapore is not claiming Gov 4.0 maturity. It is engineering the preconditions: systems thinking, national infrastructure, talent, and governance guardrails that make connected, agentic government feasible.
